New Feature: Review Labels

Sophisticated machine learning projects can quickly grow in size, encompassing thousands of images to annotate and a large number of people working on a single project simultaneously. As a project manager, it is often hard to keep track of the quality of the annotations and to ensure that all images are labeled correctly, especially within big data projects. Providing high-quality label data is a fundamental requirement for achieving good ML models. Therefore, it is crucial to continuously review the annotated images and to give feedback to your labeling team. That’s where our new feature comes into play: the option to Review Labels in your projects!

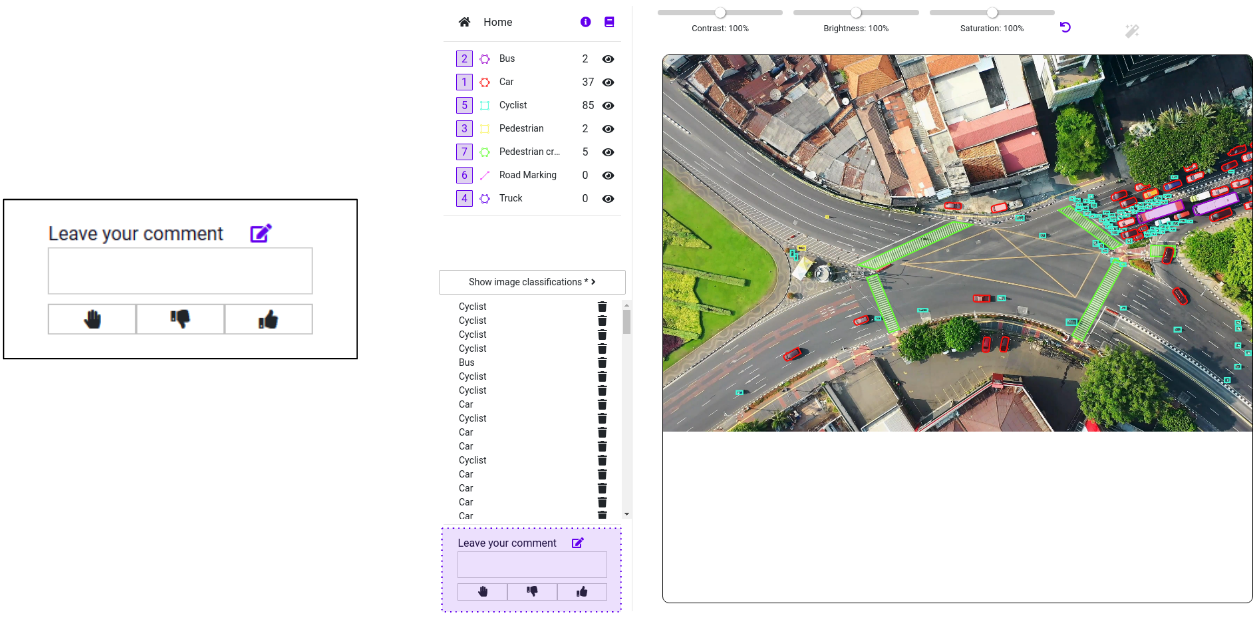

The Review mode is a powerful feature of DataGym that helps you to raise the quality of your labeled data. You can now use DataGym to inspect the images after they have been annotated by your team.

Label Mode: Use the Label Mode and the Workspace to inspect the labeled images. Depending on your evaluation, you can either approve an annotated image or reject it. A rejected image will be re-assigned to a member of your team. In the comment box, you can also leave hints for your team on how to annotate the images correctly. This feedback loop is a reliable way to improve your team's performance during the labeling process. For a quick fix, you can also correct missing or misplaced labels yourself.

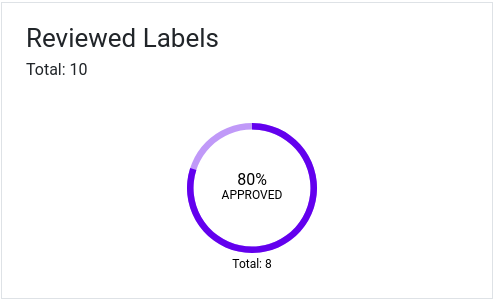

Measure Performance: To keep track of the overall team performance, you can simply observe the dashboard of your project within DataGym. The performance chart shows how many labeled images you have approved during the review process.

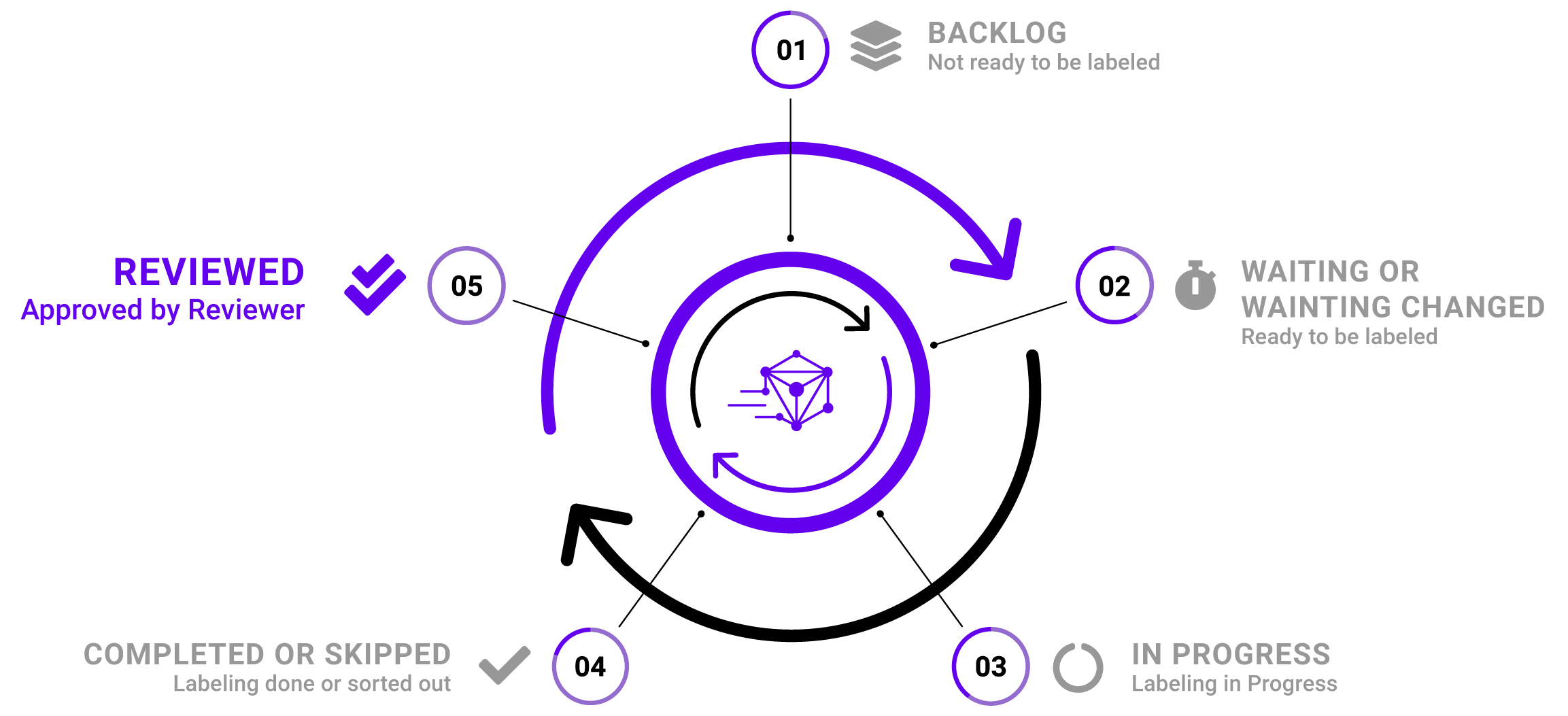

Tasks: The Review feature also extends our Task Lifecycle with an optional state: REVIEWED. You can review the annotated images as soon as your team has completed a labeling task. If you reject a completed labeling task, it is moved back to WAITING_CHANGED so that the assigned labeler can fix misplaced or missing labels.
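To make the review transition concrete, here is a minimal sketch of it as a tiny state machine. It is an illustration only, not DataGym's actual implementation or API: the names `TaskState` and `review_task` are hypothetical, and `COMPLETED` is an assumed name for the state of a finished labeling task. Only `REVIEWED` and `WAITING_CHANGED` are taken from the description above.

```python
from enum import Enum


class TaskState(Enum):
    # COMPLETED is an assumed name for a finished labeling task.
    # REVIEWED and WAITING_CHANGED are the states named in this post.
    COMPLETED = "COMPLETED"
    REVIEWED = "REVIEWED"
    WAITING_CHANGED = "WAITING_CHANGED"


def review_task(state: TaskState, approved: bool) -> TaskState:
    """Return the next state after a reviewer approves or rejects a task."""
    # In this sketch, only a completed labeling task can be reviewed.
    if state is not TaskState.COMPLETED:
        raise ValueError(f"only completed tasks can be reviewed, got {state.name}")
    # Approval moves the task to REVIEWED; rejection sends it back to the labeler.
    return TaskState.REVIEWED if approved else TaskState.WAITING_CHANGED


if __name__ == "__main__":
    print(review_task(TaskState.COMPLETED, approved=True).name)
    print(review_task(TaskState.COMPLETED, approved=False).name)
```

Modeling the review as a pure function of the current state and the reviewer's decision keeps the rule easy to test. A real system would also need to persist the new state and any reviewer comment.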

Try out the new Review feature in your own projects! Visit our documentation to learn how to enable the Review Mode in a few simple steps. If you don’t have a DataGym account yet, just sign up here – it’s free!